A language model that can predict missing words from Shakespearean sonnets, a mixed reality escape room in Hackerman Hall, a smart assistant for the visually impaired—these are only a small sample of the coding capabilities explored in the final projects of the spring semester’s upper-level computer science courses. Students presented their findings as business pitches, live demonstrations, and poster sessions; learn more about their research projects below.

Machine Learning: Deep Learning

Deep learning—a subset of machine learning in which computer algorithms learn to perform specific tasks by analyzing large amounts of data—has recently emerged as a powerful tool, with applications in virtual assistance, medical image analysis, and visual art processing. In the course Machine Learning: Deep Learning, graduate and undergraduate students study the basic concepts of deep learning and explore its applications in modern artificial intelligence tasks before developing their own AI-powered technologies to solve real-world problems.

Visitors explore the Spring 2023 Deep Learning poster session.

Thirty-three student teams presented their final projects as business pitches and poster presentations on May 10. Teams trained a model to predict protein interactions for medical research and built an action recognition system to identify human movement from static images, but the overwhelming majority of projects involved large language models. One example is the aptly named “ShakesBERT,” which successfully predicts missing words from Shakespearean sonnets using Google’s open-source Bidirectional Encoder Representations from Transformers (BERT) pre-training technique.

“This comes as no surprise, as ChatGPT and open-access competitors dominate AI reporting right now,” says course instructor Mathias Unberath, an assistant professor of computer science and a member of the university’s Laboratory for Computational Sensing and Robotics and Malone Center for Engineering in Healthcare. “But language modeling or not, it’s always exhilarating to see the creativity and commitment that students demonstrate with their final projects—and this year was definitely no exception. I’m particularly grateful to Intuitive Surgical for their continued partnership, which allows us to offer Best Project Awards.”

As in the past, the surgical robotics manufacturer gave out $1,200 in prize money this year: two $400 Best Project awards, won by “Transformer-Based Segmentation for Robotic Surgery” and “ShakesBERT,” and two $200 Runner-Up awards, presented to “Fine-Tuning BERT on Measuring Linguistic Uncertainty” and “Cross-Domain Translation Between Single-Cell Imaging and Spatial Transcriptomatics.”

Augmented Reality

Augmented reality combines computer-generated content with the real world, melding physical and virtual realities for a truly interactive experience. Common applications include Snapchat, Pokémon Go, and live language translation, but AR is also used in healthcare, education, and fitness.

Taught by Ehsan Azimi, an assistant research professor of computer science and an affiliate of the Malone Center, JHU’s Augmented Reality course introduces students to the field of AR, its applications in medicine, and relevant technological considerations. Students then spend the final weeks of the semester working in teams to develop their own AR applications.

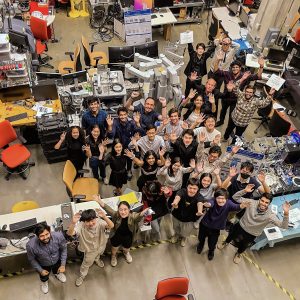

Spring 2023 Augmented Reality students celebrate the end of the semester.

Ten student teams demonstrated their final projects on May 10; projects included several medical applications, an immersive meditation experience, and even an educational mixed reality escape room that teaches players American Sign Language.

The live demonstrations “showcased the students’ creativity and technical skills,” Azimi says. “The projects this semester encompassed the entire spectrum of augmented, virtual, and mixed reality.”

In fact, the wide range of final projects contributed to his decision not to designate best project awards this year.

“Each project possessed unique qualities and characteristics that made them incomparable, much like comparing apples and oranges,” he explains. “I believe it would have been unfair to compare and rank them. However, we did give all the teams successful completion certificates for the great work they did. Their hard work and dedication paid off, and they should be proud of their impressive demos.”

Natural Language Processing: Self-Supervised Models

Self-supervised learning is a machine learning paradigm in which a model first pre-trains on unlabeled data, drawing its training signal from the data itself rather than from human-written labels, before moving on to specific tasks. It is also the foundational technology behind OpenAI’s GPT-3 language model.
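To make the idea of learning from unlabeled data concrete, here is a minimal sketch of how a single unlabeled sentence can be turned into a training example by hiding some of its words. The function name `make_masked_example`, the `[MASK]` placeholder string, and the 15 percent masking rate are illustrative assumptions (the rate mirrors the one commonly cited for BERT); this is not code from the course or from any student project.

```python
import random

MASK = "[MASK]"  # placeholder token; the exact string is an assumption


def make_masked_example(sentence, mask_prob=0.15, seed=0):
    """Turn one unlabeled sentence into (masked_tokens, targets).

    `targets` maps each hidden position to the original word, so the
    "labels" come from the text itself rather than from an annotator.
    """
    rng = random.Random(seed)
    tokens = sentence.split()  # naive whitespace tokenization, for illustration only
    if not tokens:
        return [], {}

    masked = list(tokens)
    targets = {}
    for i, tok in enumerate(tokens):
        if rng.random() < mask_prob:
            masked[i] = MASK
            targets[i] = tok

    # Make sure at least one word is hidden so every sentence yields a label.
    if not targets:
        i = rng.randrange(len(tokens))
        masked[i] = MASK
        targets[i] = tokens[i]

    return masked, targets


if __name__ == "__main__":
    line = "Shall I compare thee to a summer's day"
    masked, targets = make_masked_example(line)
    print(" ".join(masked))
    print(targets)
```

A model trained on many such pairs is asked to recover the hidden words from their context. Real systems use subword tokenizers and more elaborate masking rules than this sketch does.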

“Self-supervised language models have been the subject of intense public discussion as of late,” says course instructor Daniel Khashabi, an assistant professor of computer science and a member of the Center for Language and Speech Processing. He taught Natural Language Processing: Self-Supervised Models this semester with the goal of giving students a comprehensive understanding of self-supervised learning techniques for NLP applications, while also providing “a broader perspective on the changing landscape of AI, which is becoming more and more prevalent in our daily lives.”

The students of Spring 2023 Natural Language Processing: Self-Supervised Models show off their posters.

Students learned the necessary skills to design, implement, and understand their own self-supervised neural network models, and 23 teams presented their findings in a final project poster session on May 15. Projects ranged from an adaptive paraphrasing engine to a smart assistant designed to aid the visually impaired by answering questions about a user’s surroundings as captured via smartphone camera.

A Best Project Award was given to “Efficient Distillation of Transformers via Self-Teaching,” an algorithm that effectively distills large language models into smaller ones with minimal computational needs, while “SEEK: Stacked Models for Expert Knowledge; Efficient Knowledgeable,” a highly efficient language model composed of individual “experts,” each specializing in a specific domain with exceptional quality, was voted “Most Popular.”
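For readers unfamiliar with distillation, the sketch below shows the generic idea of training a small “student” model to match a larger “teacher” model’s output distribution. It is a textbook-style illustration only: the winning team’s self-teaching method is not described here, and the function names, the temperature value, and the example scores are all assumptions made for this sketch.

```python
import math


def log_softmax(logits, temperature=1.0):
    """Numerically stable log-probabilities from raw scores."""
    scaled = [x / temperature for x in logits]
    m = max(scaled)
    log_sum = m + math.log(sum(math.exp(x - m) for x in scaled))
    return [x - log_sum for x in scaled]


def distillation_loss(teacher_logits, student_logits, temperature=2.0):
    """KL divergence from the teacher's softened distribution to the student's.

    A higher temperature flattens both distributions, so the student also
    learns from the teacher's lower-ranked guesses.
    """
    log_p = log_softmax(teacher_logits, temperature)  # teacher
    log_q = log_softmax(student_logits, temperature)  # student
    return sum(math.exp(lp) * (lp - lq) for lp, lq in zip(log_p, log_q))


if __name__ == "__main__":
    teacher = [3.0, 1.0, 0.0]  # hypothetical scores over three candidate words
    print(distillation_loss(teacher, [2.5, 1.0, 0.0]))  # student close to teacher
    print(distillation_loss(teacher, [0.0, 1.0, 3.0]))  # student far from teacher
```

The loss is zero when the student reproduces the teacher’s distribution exactly and grows as the two diverge, which is what gives the smaller model something to optimize.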

This was Khashabi’s first time teaching an undergraduate course at Hopkins. “It was a rewarding experience for me as a teacher to see my students engage with the material and come away with valuable knowledge that they can apply in their future studies and careers,” he says.