A graph neural network (GNN) developed by computer scientists at the Johns Hopkins University could transform the computational recognition and translation of signed languages, particularly American Sign Language (ASL). The team’s findings have the potential to provide researchers with new insights into how people use sign languages and to pave the way for enhanced linguistic analysis and better communication technologies for the Deaf and hard-of-hearing communities.

Third-year undergraduate student Alessa Carbo was the primary author of the study, which was recently published in the Proceedings of the 2025 Conference on Empirical Methods in Natural Language Processing. She worked on the project with Eric Nalisnick, an assistant professor of computer science and a member of the Johns Hopkins Data Science and AI Institute.

The duo’s work is an important step toward tackling ASL handshape recognition. Handshapes, the distinctive configurations that the hands take as they form signs, are essentially the building blocks of any sign language. But despite their linguistic importance, AI models for sign language processing rarely model handshapes explicitly.

The study builds on previous work by Johns Hopkins computer scientists Xuan Zhang, Engr ’23 (PhD), and Kevin Duh, who demonstrated that explicitly modeling handshapes can benefit downstream processing tasks, such as improving ASL translation accuracy by 15%. Inspired by this result, Carbo and Nalisnick wanted to see if they could improve on it by separating static hand orientation for a single shape from how a signer’s hands move over time in a sequence.

The same handshape can appear in many different configurations, even within a single video, which makes handshape detection a complex problem.

“It’s quite challenging because a sign could comprise multiple handshapes (e.g., ‘homework’ is a compound sign that is made from the signs ‘home’ and ‘work,’ which each have a different handshape) and handshapes evolve and change during the production of a sign (e.g., transitioning between handshapes),” Nalisnick writes.

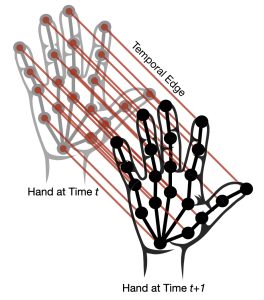

Spatial-temporal keypoints on the hand: This visualization shows the keypoints that form the spatial representation of the hand. Anatomical edges are shown in black; temporal edges (across frames) are shown in red.
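The two kinds of edges described in the caption can be illustrated with a small sketch. This is not the researchers' code: the 21-keypoint layout (wrist at index 0, then four joints per finger), the simplified wrist-to-fingertip chains, and the function names are all assumptions made for illustration, since the article does not specify the keypoint scheme.

```python
# Assumption: 21 keypoints per hand, wrist = 0, then four joints per finger.
# The article does not state the layout; this one is purely illustrative.
NUM_KEYPOINTS = 21


def anatomical_edges():
    """Within-frame edges: wrist -> finger base -> ... -> fingertip (simplified)."""
    edges = []
    for finger in range(5):
        base = 1 + 4 * finger
        edges.append((0, base))              # wrist to the first joint of the finger
        for joint in range(base, base + 3):  # chain along the finger
            edges.append((joint, joint + 1))
    return edges


def spatiotemporal_edges(num_frames):
    """Return (spatial, temporal) edge lists.

    Nodes are numbered frame * NUM_KEYPOINTS + keypoint.
    """
    spatial, temporal = [], []
    for t in range(num_frames):
        offset = t * NUM_KEYPOINTS
        spatial += [(offset + a, offset + b) for a, b in anatomical_edges()]
        if t + 1 < num_frames:
            # Link each keypoint to the same keypoint in the next frame.
            temporal += [(offset + k, offset + NUM_KEYPOINTS + k)
                         for k in range(NUM_KEYPOINTS)]
    return spatial, temporal


spatial, temporal = spatiotemporal_edges(num_frames=3)
print(len(spatial), len(temporal))  # 60 42
```

A graph neural network would then pass information along both edge lists, so each keypoint is updated from its anatomical neighbors and from its own position in adjacent frames.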

To handle handshapes that shift within a sign, Nalisnick and Carbo settled on a GNN divided into two sub-models: One looks at how the hand’s shape changes over time within a single sign, while the other searches the video for the frames that best show a handshape in its most recognizable orientation.
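The frame-searching idea can be pictured with a toy example. The sketch below is a stand-in rather than the published architecture: it assumes each frame already has a hypothetical "clarity" score and a hypothetical per-frame class probability vector, both of which would come from learned models in practice, and it simply averages predictions over the highest-scoring frames.

```python
def top_k_frames(frame_scores, k):
    """Indices of the k highest-scoring frames, returned in temporal order."""
    ranked = sorted(range(len(frame_scores)),
                    key=lambda i: frame_scores[i], reverse=True)
    return sorted(ranked[:k])


def pooled_prediction(frame_probs, frame_scores, k=2):
    """Average per-frame class probabilities over the k selected frames."""
    chosen = top_k_frames(frame_scores, k)
    num_classes = len(frame_probs[0])
    return [sum(frame_probs[i][c] for i in chosen) / len(chosen)
            for c in range(num_classes)]


# Hypothetical values for a four-frame clip and two candidate handshapes.
frame_scores = [0.1, 0.9, 0.8, 0.2]  # how clearly each frame shows the hand
frame_probs = [[0.5, 0.5], [0.9, 0.1], [0.7, 0.3], [0.4, 0.6]]

print(top_k_frames(frame_scores, 2))  # [1, 2]
print(pooled_prediction(frame_probs, frame_scores))
```

Here the transitional first and last frames are ignored, and the prediction rests on the two frames where the handshape is clearest.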

Their approach better captures the natural structure of sign language, particularly for subtle distinctions that depend on both spatial and temporal features, the researchers say. Testing it on a new dataset of annotated ASL videos, they found that this separation of time dynamics and hand configuration improves handshape recognition significantly.

“And because handshape is a fundamental parameter of all sign languages, we believe our model can be a useful tool for sign language linguists,” Carbo says. “Say a linguist has a large collection of videos of people signing, and she wants to find all the occurrences of a particular handshape. Our model could allow her to quickly and automatically find those video segments.”

One hurdle the researchers currently face is a lack of well-annotated data.

“For example, even if we have a video of someone signing and know which handshapes will appear in the video, without annotations that label the specific time points that the given handshape is visible, our model has to learn that on its own, which is challenging,” Nalisnick says.
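One common way to train with only video-level labels is multiple-instance learning, sketched below. This is a generic technique offered for illustration, not necessarily what the researchers used: the video counts as containing a handshape if any frame does, so the video-level probability is taken as the maximum over hypothetical per-frame probabilities, and the loss is computed on that single number.

```python
import math


def video_level_probability(frame_probs):
    """Probability that the handshape appears somewhere: max over frames."""
    return max(frame_probs)


def weak_label_loss(frame_probs, present):
    """Binary cross-entropy on the video-level probability only.

    No frame-level timestamps are needed; `present` is the video-level label.
    """
    p = min(max(video_level_probability(frame_probs), 1e-7), 1 - 1e-7)
    return -math.log(p) if present else -math.log(1.0 - p)


# Hypothetical per-frame probabilities for one handshape in a four-frame clip.
frame_probs = [0.05, 0.10, 0.85, 0.20]

# The frame the model implicitly "points to" as showing the handshape.
best_frame = max(range(len(frame_probs)), key=frame_probs.__getitem__)
print(best_frame)  # 2
print(weak_label_loss(frame_probs, present=True))
```

Because only the maximum frame receives a training signal, the model has to discover the right time points by itself, which is the difficulty Nalisnick describes.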

The data shortage is even more acute for sign languages with fewer fluent signers. For example, Auslan, the sign language used in Australia, and Dutch Sign Language each have fewer than 15,000 native signers, compared with roughly 450,000 for ASL, meaning there simply aren’t enough videos available to train AI models to recognize and translate these sign languages.

“Yet these sign languages do have linguistic commonalities, such as shared handshapes, so we’re hoping our model can be used to help with transfer learning—that is, when an AI model can learn from one dataset (like ASL) in a way that improves its understanding of a related, but distinct, dataset (like Dutch Sign Language),” says Nalisnick.

“We’re excited that our work could help with both AI applications as well as researchers trying to find new insights about how people use sign languages around the globe,” says Carbo.